How Algorithms Are Shaping Our World

Algorithms are no longer just lines of code buried in software; they have become invisible architects of our daily lives. From the videos we watch, to the jobs we apply for, to the validation we crave through likes and shares, algorithms are shaping human behavior in profound ways.

The Rise of Influencer Culture

Social media platforms thrive on engagement, and their algorithms reward content that keeps people scrolling, liking, and sharing. This feedback loop has helped create influencer culture, in which creators tailor their work to please algorithms as much as their audiences. While this culture has opened new career paths and opportunities, it has also contributed to burnout among creators who feel pressured to constantly produce, optimize, and chase the next viral moment.

The Dopamine Economy

Notifications, likes, and comments tap into the brain's reward system, which relies on dopamine, a neurotransmitter associated with motivation and the anticipation of reward. Many platform features are designed to capitalize on this response and keep users hooked. What feels like casual scrolling is often carefully engineered conditioning, training us to crave validation in digital form.

Viewers Under the Algorithm’s Spell

Viewers may think they’re making free choices about what to watch or read, but in reality, algorithms largely decide what appears in their feeds. Recommendation engines, like those used by YouTube and TikTok, are optimized to maximize watch time and engagement, not necessarily quality or accuracy. The result? Filter bubbles, polarization, and the subtle manipulation of attention at a massive scale.
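To make the trade-off concrete, here is a deliberately simplified sketch of ranking a feed by predicted engagement. It is not how YouTube or TikTok actually work; the `Video` fields, the `rank_feed` function, the `quality_weight` parameter, and all numbers are hypothetical. The point is only that when the scoring formula gives quality no weight, the feed is ordered purely by expected watch time.

```python
from dataclasses import dataclass


@dataclass
class Video:
    title: str
    predicted_watch_minutes: float  # hypothetical output of an engagement model
    quality_score: float            # hypothetical accuracy/quality rating, 0..1


def rank_feed(videos, quality_weight=0.0):
    """Order videos by predicted watch time plus an optional quality bonus.

    With quality_weight=0.0 (the hypothetical default), quality is ignored
    and the ranking depends on predicted engagement alone.
    """
    def score(v):
        return v.predicted_watch_minutes + quality_weight * v.quality_score

    return sorted(videos, key=score, reverse=True)


videos = [
    Video("Careful explainer", predicted_watch_minutes=3.0, quality_score=0.9),
    Video("Outrage clip", predicted_watch_minutes=8.0, quality_score=0.2),
]

# Engagement only: the outrage clip wins.
print([v.title for v in rank_feed(videos)])
# ['Outrage clip', 'Careful explainer']

# Weighting quality heavily flips the order.
print([v.title for v in rank_feed(videos, quality_weight=10.0)])
# ['Careful explainer', 'Outrage clip']
```

The design choice that matters here is the objective itself: the same sorting code produces a very different feed depending on what the score rewards.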

Algorithms at Work: The New Gatekeepers

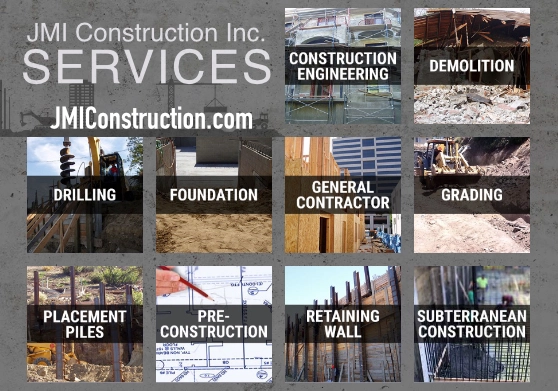

Beyond entertainment, algorithms increasingly decide who gets opportunities in the job market. Applicant Tracking Systems (ATS) filter résumés before a human ever sees them and can eliminate qualified candidates who don’t use the “right” keywords. In this way, invisible code shapes career paths, access to resources, and even economic mobility.
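A naive keyword filter shows how a qualified candidate can slip through the cracks. This is a minimal, hypothetical sketch, not the logic of any real ATS product (commercial systems vary and may parse, weight, or normalize résumés differently). The `keyword_screen` function, the keyword list, and both sample résumés are invented for illustration.

```python
import re


def keyword_screen(resume_text, required_keywords, min_matches):
    """Hypothetical ATS-style filter: pass a résumé only if it contains
    at least `min_matches` of the required keywords.

    Matching is case-insensitive and whole-word, with no synonym handling,
    so related skills phrased differently earn no credit.
    """
    text = resume_text.lower()
    matched = [
        kw for kw in required_keywords
        if re.search(r"\b" + re.escape(kw.lower()) + r"\b", text)
    ]
    return len(matched) >= min_matches, matched


keywords = ["project management", "python", "sql"]

resume_a = "Led project management for a data team; built reports in Python and SQL."
resume_b = "Coordinated cross-team initiatives; built reports with pandas and PostgreSQL queries."

print(keyword_screen(resume_a, keywords, min_matches=2))
# (True, ['project management', 'python', 'sql'])
print(keyword_screen(resume_b, keywords, min_matches=2))
# (False, [])
```

The second candidate clearly has relevant experience (pandas is a Python library, PostgreSQL is queried with SQL), but because the exact words never appear, the filter rejects the résumé before any person reads it.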

The Bigger Picture

Algorithms are powerful tools. They can connect people across continents, surface new opportunities, and make life more efficient. But they also raise urgent questions: Who controls these systems? How transparent should they be? And how do we protect human agency in a world where invisible code is constantly nudging our decisions?

As algorithms grow more sophisticated, the challenge is not just technical but ethical. We must ask whether we are shaping the algorithms or they are shaping us.

Petra Lugar